AI News Today (January 6, 2026): Explore the Top 9 AI Updates & Breakthroughs

Jan 6, 2026: The tech world has officially descended upon Las Vegas for CES 2026, and if the first 24 hours are any indication, we are no longer just talking about chatbots. We are entering the era of Physical AI, where intelligence moves out of the screen and into the gears, chips, and fabric of our daily lives.

From major corporate consolidations to robots that can finally act on a “vague” human request, January 6, 2026, marks a clear shift in the trajectory of the decade.

This edition of AI News Today (Jan 6, 2026) covers the most important developments shaping the AI ecosystem right now and what they mean for businesses, developers, and everyday users.

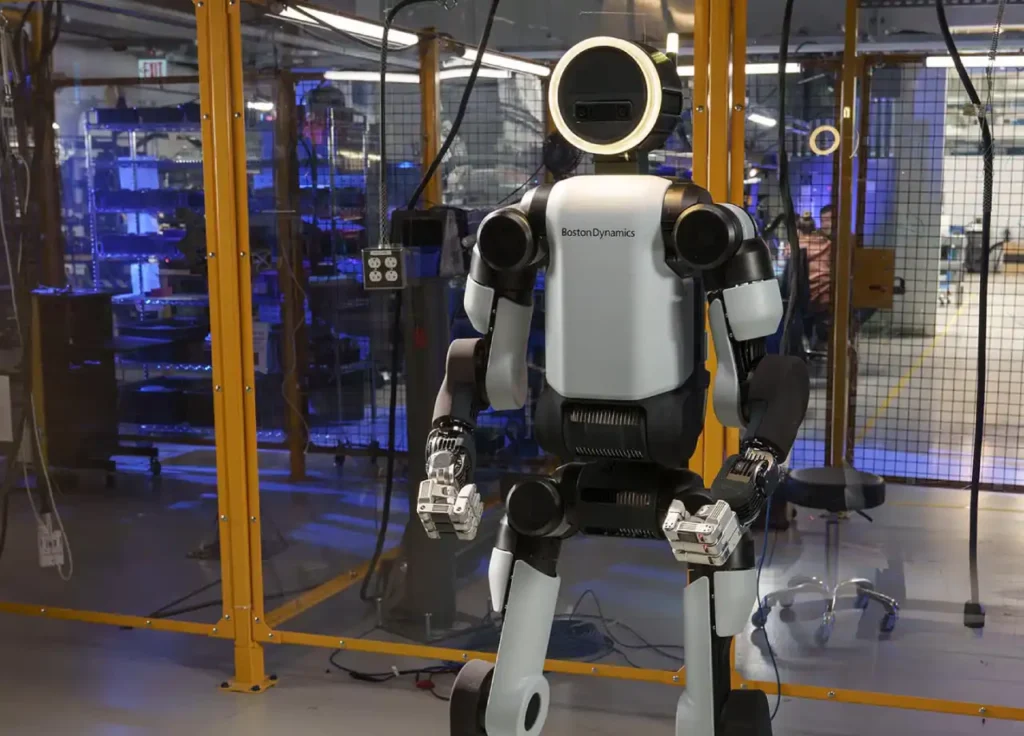

1. Google DeepMind Teams Up with Boston Dynamics

Google DeepMind and Boston Dynamics have announced the integration of Gemini-class multimodal models into the new electric Atlas humanoid. Many observers are already calling it one of the most significant robotics collaborations of the 2020s.

Atlas has long been a showcase of physical agility, but it lacked “common sense.” The recent unveiling showed an Atlas unit responding to complex, unscripted commands such as “Clean up the spill, but don’t use the good towels.” By combining DeepMind’s advanced reasoning capabilities with Boston Dynamics’ mobility, the partnership aims to bridge the “brain-body” gap in robotics. The new Atlas will be capable of:

- Adapting to changing workflows without reprogramming

- Understanding natural language commands

- Interpreting its physical surroundings in real time

This move reflects DeepMind’s long-term vision of embodied intelligence, where AI systems learn by interacting with the physical world rather than just processing text or images.

2. NVIDIA Unveils the “Vera Rubin” Platform

At CES 2026, Jensen Huang introduced the successor to the Blackwell architecture: the Vera Rubin platform. Named after the pioneering astronomer, this new AI superchip platform is engineered for “Agentic AI,” meaning autonomous systems capable of planning and executing multi-step tasks over extended periods without human involvement.

According to NVIDIA, Vera Rubin delivers:

- Massive training throughput for large AI models

- Improved energy efficiency and reduced resource usage

- Optimized performance for multi-agent AI architectures

NVIDIA says the Rubin chips offer a 4x increase in energy efficiency for inference, addressing growing global concern over the power consumption of data centers. NVIDIA is now pitching the platform as the “operating system for the physical world.”

3. Intel’s “Panther Lake” and the 18A Milestone

While NVIDIA dominates the data center, Intel is moving to reclaim the personal computer market. The official launch of the Core Ultra Series 3 (Panther Lake) processors marked a historic moment for the company, as they are the first mass-market chips built on the Intel 18A process node.

These AI-focused chips are designed for:

- Consumer laptops

- Robotics platforms

- Edge AI applications

- On-device AI inference

These chips are designed to make “Cloud AI” a secondary option. With an NPU (Neural Processing Unit) capable of running large models locally, Panther Lake allows users to run personalized AI assistants offline. Because data no longer has to leave the device or wait on a network round trip, this improves both privacy and responsiveness.

4. Accenture’s Strategic Acquisition of Faculty

Consolidation within the AI services sector escalated as Accenture announced its intent to acquire Faculty, a London-based AI company known for its “decision intelligence” platforms.

This acquisition strengthens Accenture’s ability to help organizations:

- Build AI systems that support strategic decisions

- Apply AI to high-stakes business and government use cases

- Scale AI adoption beyond pilots into core operations

As enterprises move beyond the “experimentation phase” of Generative AI, they are focusing on ROI. Faculty’s experience in applying AI to high-stakes decision-making makes this a key acquisition for Accenture. The acquisition is part of the company’s plan to lead the $1 trillion AI consulting market.

5. The Luxury Robotaxi: Uber, Lucid, and Nuro

The ride-sharing landscape shifted with the debut of a three-way collaboration between Uber, Lucid Motors, and Nuro. At CES, the partners unveiled a purpose-built autonomous luxury vehicle designed for Uber’s premium tiers.

Currently being tested in San Francisco, the vehicle features:

- Fully autonomous driving capabilities

- An AI-driven in-cabin experience

- Personalized entertainment, climate, and route preferences

- Advanced safety and situational awareness systems

Unlike previous autonomous vehicles, it features an AI-curated cabin that adjusts lighting, temperature, and “digital window scenery” based on the rider’s biometric stress levels. With road testing already underway, the “autonomous era” is now reaching the luxury consumer market.

6. Marvell Expands the AI Data Center with XConn

In the “plumbing” of AI, connectivity is crucial. Marvell Technology announced its acquisition of XConn Technologies, a leader in CXL (Compute Express Link) switching.

As AI models grow to trillions of parameters, data transfer speeds between chips can become a bottleneck. By acquiring XConn, Marvell is positioning itself as an essential architect of the “back-end” of AI, helping massive clusters like those used by OpenAI and Meta function as a single, seamless system.

7. LG’s “CLOiD”: The Home Robot Finally Arrives

Until now, home robots have largely been limited to vacuum cleaners. LG Electronics introduced CLOiD, a companion robot that uses “Physical AI” to navigate the complexities of a home.

CLOiD is built to:

- Navigate homes autonomously

- Understand contextual instructions

- Perform tasks like cleaning, object delivery, and monitoring

Using a multimodal vision system, CLOiD can identify laundry, sort recycling, and assist elderly users with mobility. It represents a shift from “functional” robotics to “empathetic” robotics: machines that understand the context of a household.

8. Synopsys and the “Digital Twin” of the Auto Industry

Developing a new car has traditionally taken about five years. Synopsys announced new AI-driven Virtualizer Developer Kits, which allow automakers to simulate every electronic component of a car before manufacturing.

These kits enable:

- Faster development cycles

- Reduced testing costs

- Early detection of software and hardware issues

- Improved safety and reliability

By partnering with NXP and Texas Instruments, Synopsys claims it can reduce the development cycle for software-defined vehicles by 12 months. This is “AI-driven engineering”: using simulated silicon to design the future of transportation.

9. Lego’s “Smart Play” Platform: AI in the Toy Box

Lego demonstrated that AI isn’t just for data scientists. They unveiled the Lego Smart Brick, a 2×4 stud brick with a micro-camera and a low-power AI chip.

These smart bricks can:

- Detect movement and orientation

- Communicate with other bricks

- Respond to user interactions

- Enable programmable, interactive creations

Children can build models that “recognize” each other. A Lego dragon can now “see” a Lego knight and react with light and sound effects. It’s an application of “Edge AI,” bringing computer vision to a price point and form factor accessible to children.

The Big Picture: What Does This Mean?

The news from January 6, 2026, highlights the transition from “Generative AI” (creating text and images) to the era of “Applied Agentic AI” (making things happen in the real world).

Whether through NVIDIA’s Rubin chips, Intel’s Panther Lake processors, or Boston Dynamics’ robotics, “Agentic AI” is becoming increasingly integrated into the physical world.

As CES 2026 continues, the focus shifts from what AI can do to how these autonomous agents will be integrated into society. From children’s toys to autonomous vehicles, AI is evolving from a tool into a collaborator.